A new generation of contact lenses built with very small circuits and LEDs promises bionic eyesight

The human eye is a perceptual powerhouse. It can see millions of colors, adjust easily to shifting light conditions, and transmit information to the brain at a rate exceeding that of a high-speed Internet connection.

But why stop there?

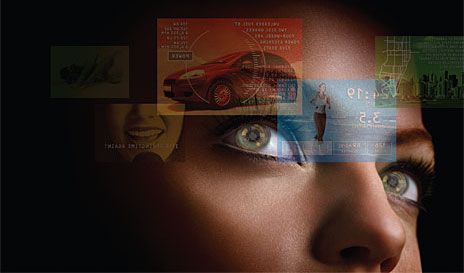

In the Terminator movies, Arnold Schwarzenegger’s character sees the world with data superimposed on his visual field—virtual captions that enhance the cyborg’s scan of a scene. In stories by the science fiction author Vernor Vinge, characters rely on electronic contact lenses, rather than smartphones or brain implants, for seamless access to information that appears right before their eyes.

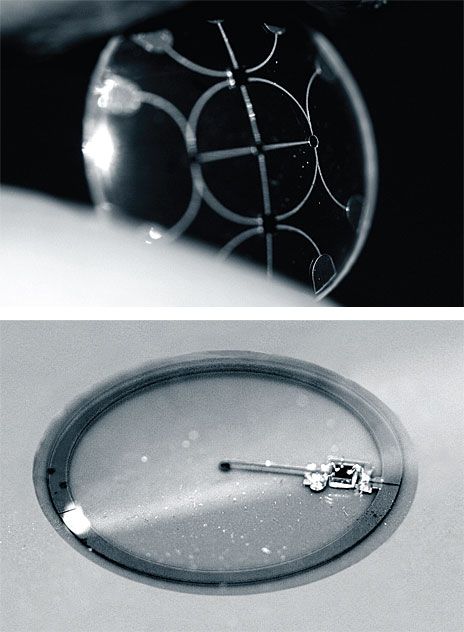

These visions (if I may) might seem far-fetched, but a contact lens with simple built-in electronics is already within reach; in fact, my students and I are already producing such devices in small numbers in my laboratory at the University of Washington, in Seattle [see sidebar, "A Twinkle in the Eye"]. These lenses don’t give us the vision of an eagle or the benefit of running subtitles on our surroundings yet. But we have built a lens with one LED, which we’ve powered wirelessly with RF. What we’ve done so far barely hints at what will soon be possible with this technology.

Conventional contact lenses are polymers formed in specific shapes to correct faulty vision. To turn such a lens into a functional system, we integrate control circuits, communication circuits, and miniature antennas into the lens using custom-built optoelectronic components. Those components will eventually include hundreds of LEDs, which will form images in front of the eye, such as words, charts, and photographs. Much of the hardware is semitransparent so that wearers can navigate their surroundings without crashing into them or becoming disoriented. In all likelihood, a separate, portable device will relay displayable information to the lens’s control circuit, which will operate the optoelectronics in the lens.

These lenses don’t need to be very complex to be useful. Even a lens with a single pixel could aid people with impaired hearing or be incorporated as an indicator into computer games. With more colors and resolution, the repertoire could be expanded to include displaying text, translating speech into captions in real time, or offering visual cues from a navigation system. With basic image processing and Internet access, a contact-lens display could unlock whole new worlds of visual information, unfettered by the constraints of a physical display.

In recent trials, rabbits wore lenses containing metal circuit structures for 20 minutes at a time with no adverse effects.

Seeing the light—LED light—is a reasonable accomplishment. But seeing something useful through the lens is clearly the ultimate goal. Fortunately, the human eye is an extremely sensitive photodetector. At high noon on a cloudless day, lots of light streams through your pupil, and the world appears bright indeed. But the eye doesn’t need all that optical power—it can perceive images with only a few microwatts of optical power passing through its lens. An LCD computer screen is similarly wasteful. It sends out a lot of photons, but only a small fraction of them enter your eye and hit the retina to form an image. But when the display is directly over your cornea, every photon generated by the display helps form the image.
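To put a rough number on how wasteful a conventional screen is, here is a minimal sketch that estimates what fraction of the light from one screen pixel actually passes through the pupil. It is an illustration under stated assumptions, not a measurement: the pixel is treated as a small Lambertian emitter viewed head-on, and the 4-millimeter pupil and 0.5-meter viewing distance are example values that do not come from our work.

```python
import math

def lambertian_capture_fraction(pupil_diameter_m, distance_m):
    """Fraction of the light from a small Lambertian emitter that enters
    an on-axis circular pupil.

    For a Lambertian source, the power emitted into a cone of half-angle
    theta is proportional to sin(theta)**2, and the full hemisphere
    corresponds to sin(theta) = 1.
    """
    half_angle = math.atan((pupil_diameter_m / 2) / distance_m)
    return math.sin(half_angle) ** 2

# Assumed example values (not from the article): 4 mm pupil, screen 0.5 m away.
fraction = lambertian_capture_fraction(4e-3, 0.5)
print(f"Fraction of emitted light entering the pupil: {fraction:.1e}")
```

Under these assumptions only about one photon in tens of thousands reaches the eye, which is why a display sitting directly on the cornea can get by with so little optical power.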

The beauty of this approach is obvious: With the light coming from a lens on your pupil rather than from an external source, you need much less power to form an image. But how to get light from a lens? We’ve considered two basic approaches. One option is to build into the lens a display based on an array of LED pixels; we call this an active display. An alternative is to use passive pixels that merely modulate incoming light rather than producing their own. Basically, they construct an image by changing their color and transparency in reaction to a light source. (They’re similar to LCDs, in which tiny liquid-crystal “shutters” block or transmit white light through a red, green, or blue filter.) For passive pixels on a functional contact lens, the light source would be the environment. The colors wouldn’t be as precise as with a white-backlit LCD, but the images could be quite sharp and finely resolved.

We’ve mainly pursued the active approach and have produced lenses that can accommodate an 8-by-8 array of LEDs. For now, active pixels are easier to attach to lenses. But using passive pixels would significantly reduce the contact’s overall power needs—if we can figure out how to make the pixels smaller, higher in contrast, and capable of reacting quickly to external signals.

By now you’re probably wondering how a person wearing one of our contact lenses would be able to focus on an image generated on the surface of the eye. After all, a normal and healthy eye cannot focus on objects that are less than 10 centimeters from the corneal surface. The LEDs by themselves merely produce a fuzzy splotch of color in the wearer’s field of vision. Somehow the image must be pushed away from the cornea. One way to do that is to employ an array of even smaller lenses placed on the surface of the contact lens. Arrays of such microlenses have been used in the past to focus lasers and, in photolithography, to draw patterns of light on a photoresist. On a contact lens, each pixel or small group of pixels would be assigned to a microlens placed between the eye and the pixels. Spacing a pixel and a microlens 360 micrometers apart would be enough to push back the virtual image and let the eye focus on it easily. To the wearer, the image would seem to hang in space about half a meter away, depending on the microlens.
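The relationship between the pixel-to-microlens spacing and the apparent image distance can be sketched with the ideal thin-lens equation. This is a simplified model, not our design calculation: it assumes a single thin lens in air, ignores the refractive index of the lens polymer and the gap between microlens and eye, and the function names are made up for this illustration.

```python
def focal_length_for_virtual_image(pixel_to_lens_m, image_distance_m):
    """Focal length that places a virtual image at image_distance_m when the
    pixel sits pixel_to_lens_m from the lens.

    Thin-lens equation with a virtual image on the same side as the pixel:
        1/f = 1/s_o - 1/|s_i|
    """
    return 1.0 / (1.0 / pixel_to_lens_m - 1.0 / image_distance_m)

def virtual_image_distance(pixel_to_lens_m, focal_length_m):
    """Distance of the virtual image for a given spacing and focal length."""
    if pixel_to_lens_m >= focal_length_m:
        raise ValueError("pixel must sit inside the focal length for a virtual image")
    return 1.0 / (1.0 / pixel_to_lens_m - 1.0 / focal_length_m)

spacing = 360e-6  # 360 micrometers, the spacing quoted in the text
f = focal_length_for_virtual_image(spacing, 0.5)
print(f"Focal length for an image 0.5 m away: {f * 1e6:.2f} micrometers")

# A slightly different (hypothetical) microlens moves the image considerably.
near = virtual_image_distance(spacing, 361e-6)
print(f"Image distance with a 361-micrometer focal length: {near:.2f} m")
```

In this idealized model the pixel sits just inside the focal length (about 360.26 µm for a half-meter image), and a change of well under a micrometer in focal length shifts the image by tens of centimeters, which is consistent with the image distance depending on the microlens.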

Another way to make sharp images is to use a scanning microlaser or an array of microlasers. Laser beams diverge much less than LED light does, so they would produce a sharper image. A kind of actuated mirror would scan the beams from a red, a green, and a blue laser to generate an image. The resolution of the image would be limited primarily by the narrowness of the beams, and the lasers would obviously have to be extremely small, which would be a substantial challenge. However, using lasers would ensure that the image is in focus at all times and eliminate the need for microlenses.

Whether we use LEDs or lasers for our display, the area available for optoelectronics on the surface of the contact is really small: roughly 1.2 millimeters in diameter. The display must also be semitransparent, so that wearers can still see their surroundings. Those are tough but not impossible requirements. The LED chips we’ve built so far are 300 µm in diameter, and the light-emitting zone on each chip is a 60-µm-wide ring with a radius of 112 µm. We’re trying to reduce that by an order of magnitude. Our goal is an array of 3600 10-µm-wide pixels spaced 10 µm apart.
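As a quick sanity check on that target, the sketch below works out the footprint of 3600 pixels, each 10 µm wide and spaced 10 µm apart. The square 60-by-60 layout is an assumption made for the arithmetic; the text gives only the pixel count, width, and spacing.

```python
import math

def square_array_geometry(pixel_count, pixel_width_um, gap_um):
    """Return (pixels per side, pitch, side length, fill factor) for a
    square grid of square pixels. Lengths are in micrometers."""
    per_side = math.isqrt(pixel_count)
    if per_side * per_side != pixel_count:
        raise ValueError("pixel_count must be a perfect square for a square grid")
    pitch = pixel_width_um + gap_um
    # N pixels in a row are separated by N - 1 gaps.
    side = per_side * pixel_width_um + (per_side - 1) * gap_um
    # Fraction of each pitch-by-pitch cell covered by the emitter.
    fill = (pixel_width_um / pitch) ** 2
    return per_side, pitch, side, fill

per_side, pitch, side, fill = square_array_geometry(3600, 10, 10)
print(f"{per_side} x {per_side} pixels, pitch {pitch} um, "
      f"side {side} um, fill factor {fill:.0%}")
```

Under this assumed layout the array is 1190 µm on a side, close to the roughly 1.2-millimeter figure above, and the emitters cover a quarter of the array area, leaving the rest available to pass ambient light.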
